NOTE: the tutorials and lessons shown by CrowPi2 are **IN ENGLISH**

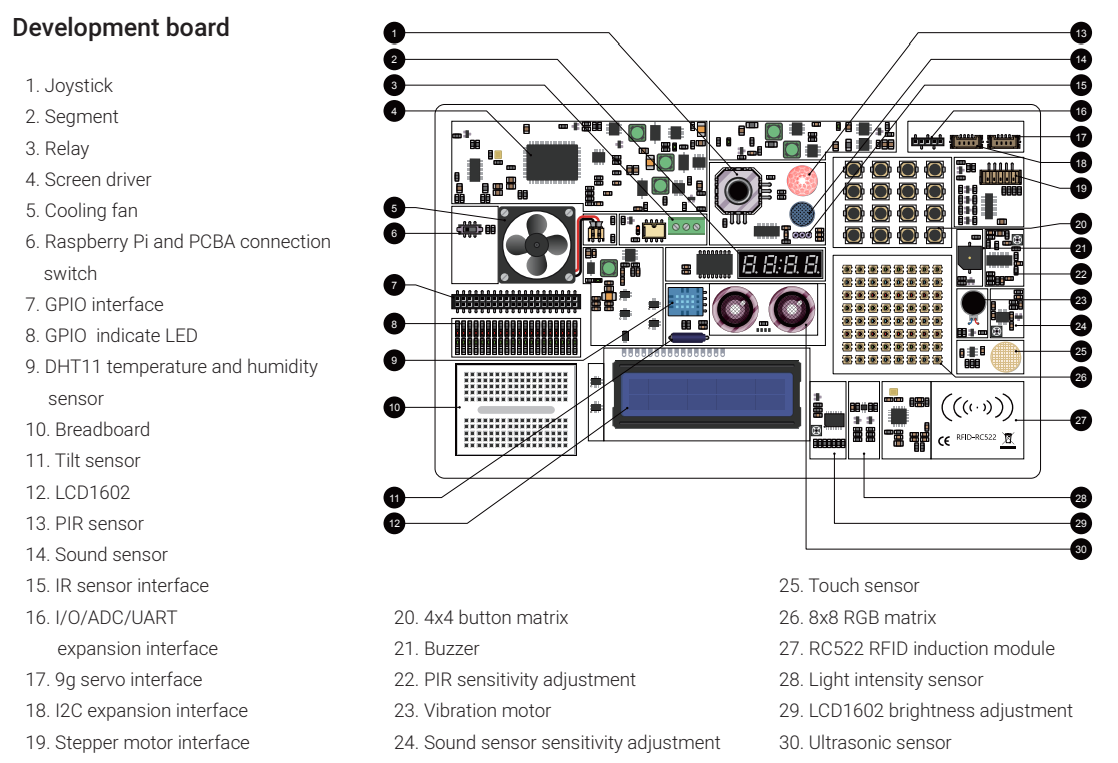

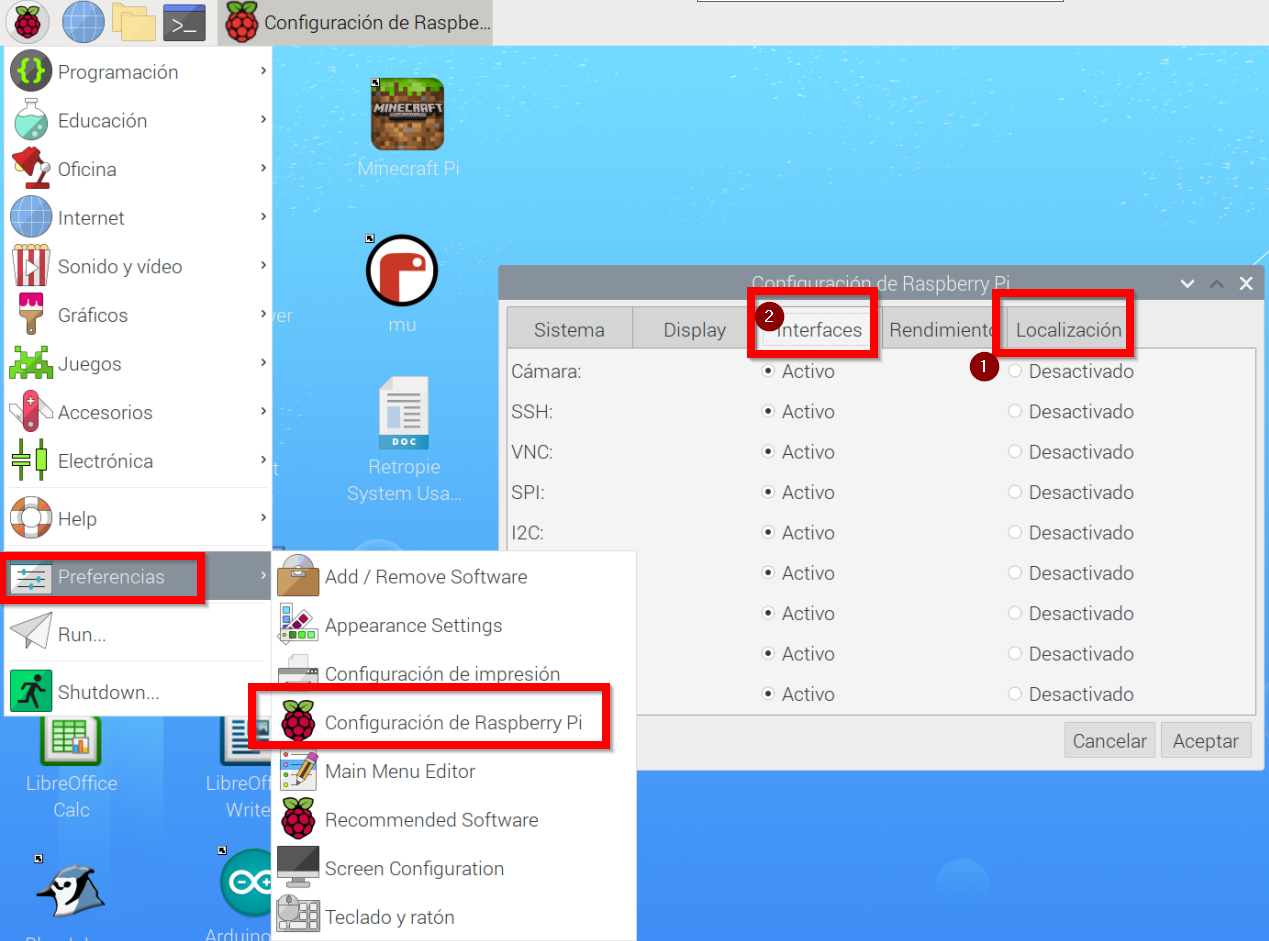

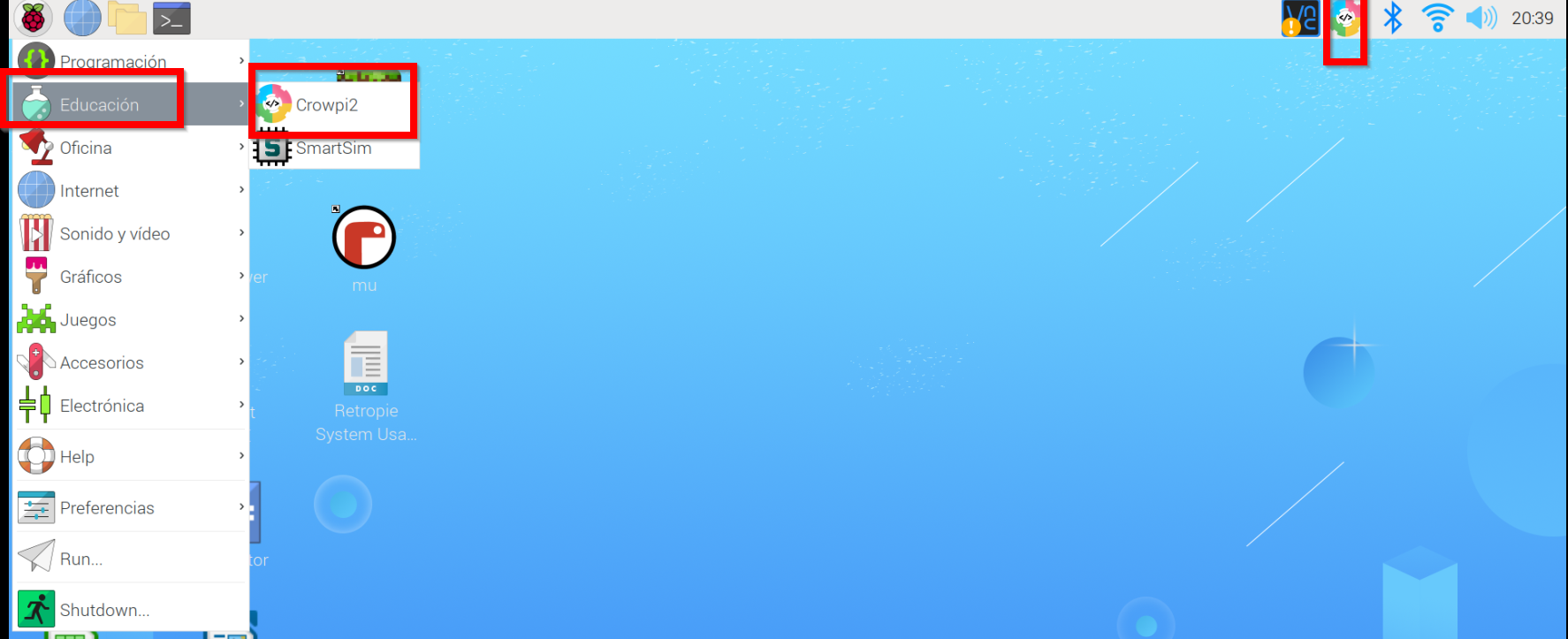

### **What is it?**

CrowPi2 is essentially a computer adapted for robotics whose processor is a Raspberry Pi. That is, it has a keyboard, a screen, a power supply... and a whole host of sensors and actuators for running experiments with the Raspberry Pi.

[](https://libros.catedu.es/uploads/images/gallery/2024-12/crowpi2sliver-4-5.webp)

Source: [https://www.crowpi.cc/](https://www.crowpi.cc/)

When buying, remember to ask for the Spanish keyboard, and bear in mind that the Raspberry Pi is usually not included. It costs about [365€](https://robotopia.es/kits-educativos/300-90-crowpi2.html#/52-opciones_crowpi2-con_raspberry_pi_4gb).

The built-in sensors are:

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-20-19-40-01-crowpi2-rasspberry-pi-laptop-user-manual.png)

Source: CrowPi2 manual, downloadable [here](https://www.manualslib.com/download/3143192/Elecrow-Crowpi2.html)

To see which GPIO pin each sensor and actuator is connected to, see [https://github.com/Elecrow-RD/CrowPi2](https://github.com/Elecrow-RD/CrowPi2)

If you want to use the breadboard (10) with the GPIO pins directly, set switch (6) to OFF; otherwise leave it ON so that you can use the CrowPi2's built-in components.

### **Hardware setup**

We connect our Raspberry Pi to the CrowPi2 as described in the instructions, paying particular attention to connecting the power supply and the display. CrowPi2 manual downloadable [here](https://www.manualslib.com/download/3143192/Elecrow-Crowpi2.html).

### **Software setup**

We need to download the official image, which is a Raspbian with educational programs, most notably the Crowpi2 program, which we discuss below. To download the image, [here is the official page.](https://www.crowpi.cc/blogs/news/how-to-update-the-crowpi2-os-image) To write it to an SD card (32 GB recommended) we can use [balenaEtcher](https://etcher.balena.io/).

Once the card is written, boot the CrowPi2 from it and configure the keyboard and Wi-Fi (1 in the figure). We also recommend enabling SSH, VNC and the camera (2) so that you can operate CrowPi2 from another computer.

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-20-20-41-31-192-168-1-46-raspberrypi-realvnc-viewer.png)

Then, in the terminal, we recommend updating the software with these commands:

```
sudo apt-get update
sudo apt-get dist-upgrade
```

### **Crowpi educational program**

It comes preinstalled in the official CrowPi2 image and can be opened from these two places:

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-20-20-39-16-192-168-1-46-raspberrypi-realvnc-viewer.png)

On start-up, this window appears:

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-20-21-06-47-192-168-1-46-raspberrypi-realvnc-viewer.png)

### **Crowpi Learning educational program**

When this program starts we are presented with a login dialog. Users can be created without any confirmation and without Internet access (email, etc.), which is ideal for underage pupils. This is excellent because it lets us share the same CrowPi2 between different pupils, each working at their own pace, since the program records which lessons each user has completed.

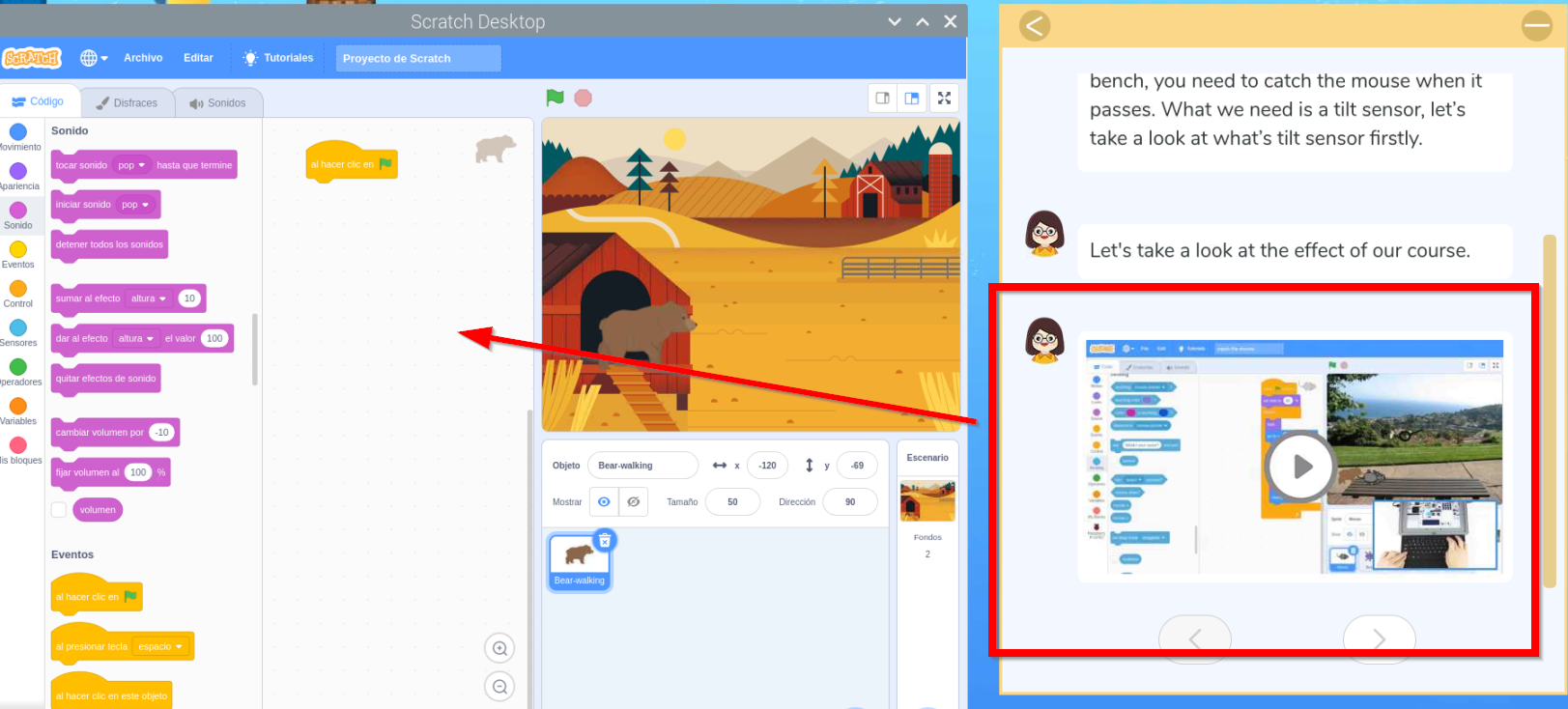

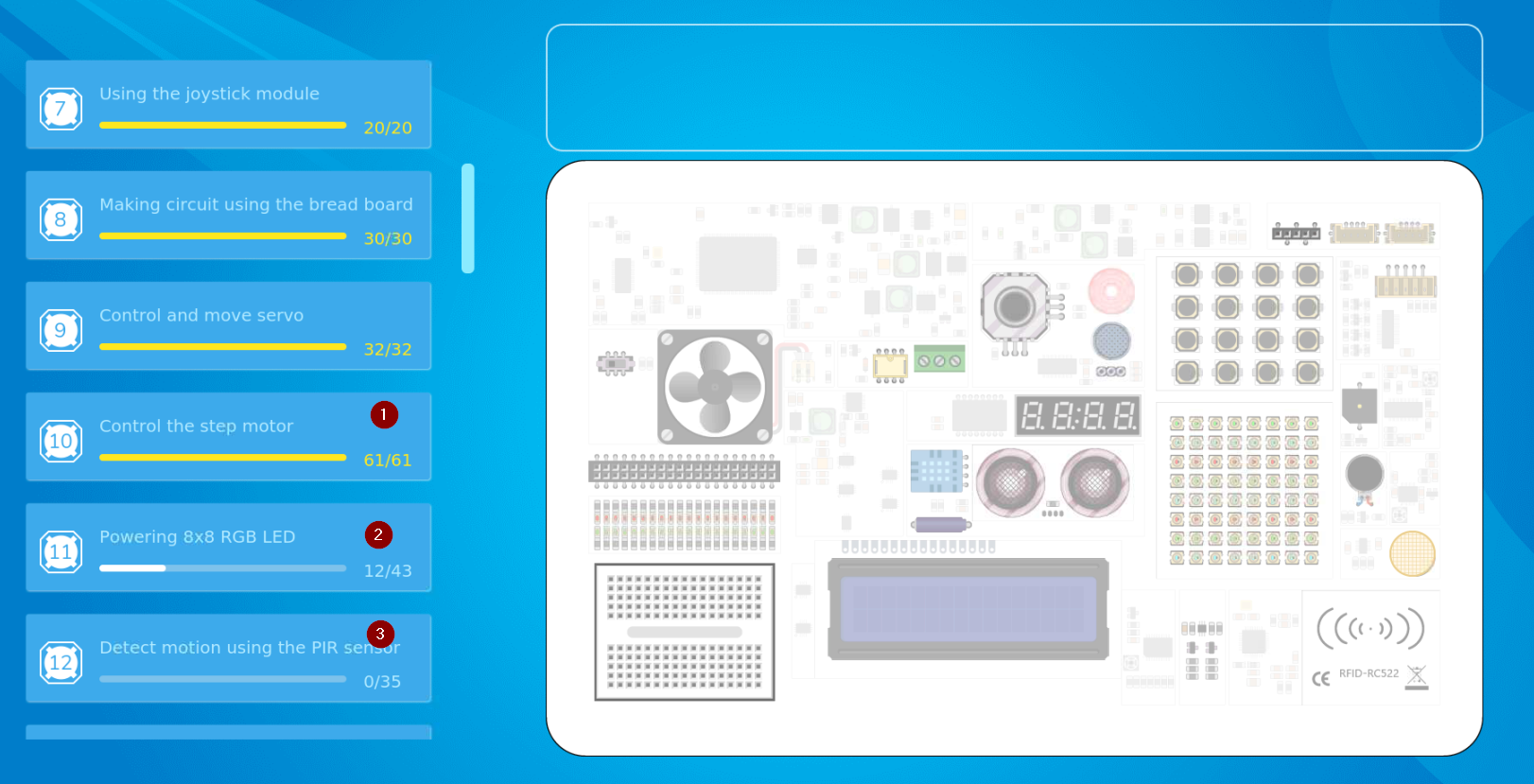

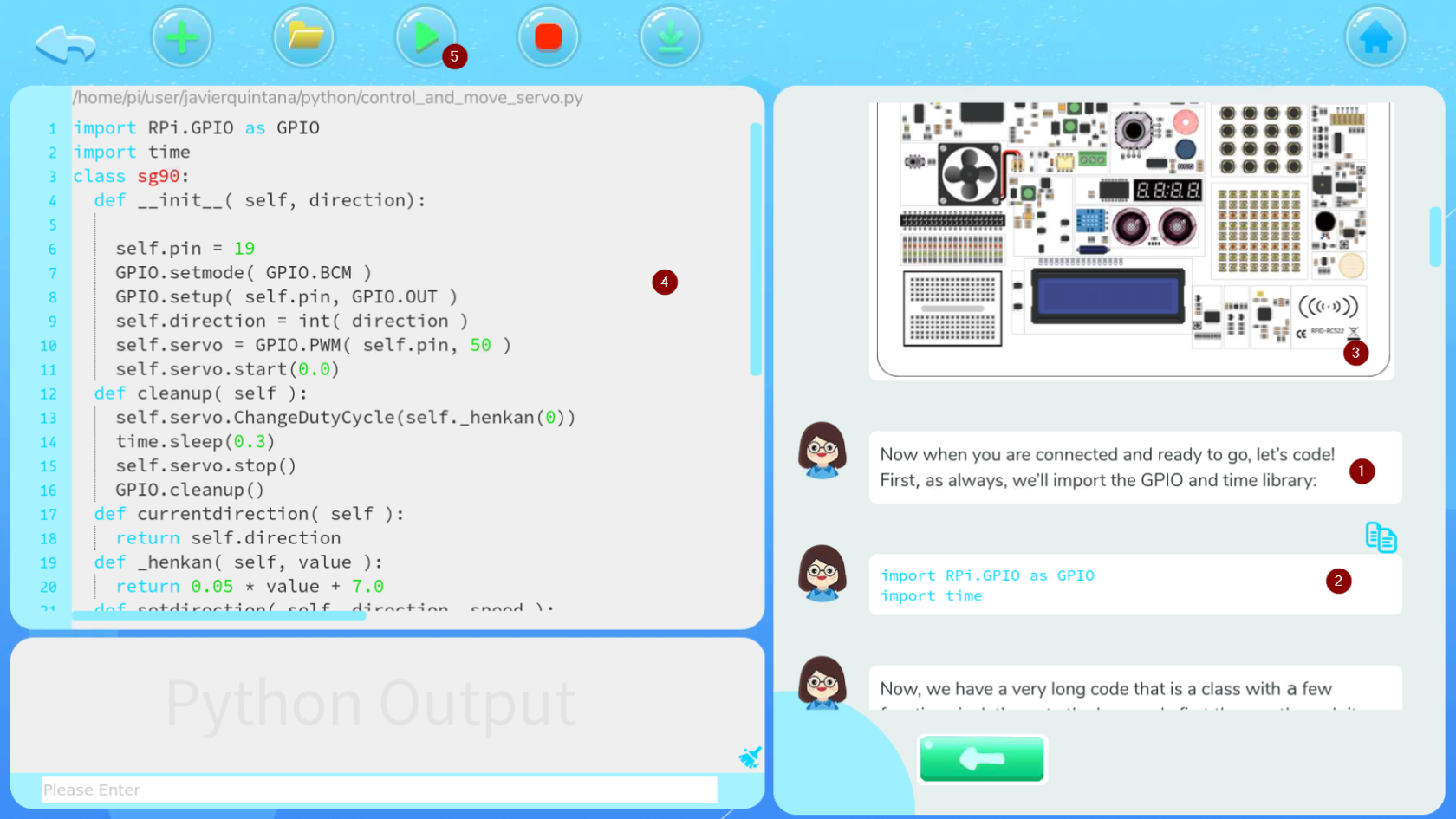

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-20-21-03-22-192-168-1-46-raspberrypi-realvnc-viewer.png)

After logging in, it asks which type of programming we want:

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-20-21-14-31-192-168-1-46-raspberrypi-realvnc-viewer.png)

### **Crowpi Learning Scratch educational program**

It shows 16 lessons: which ones we have completed (1), which one we are on (2) and which ones remain. You cannot move on to the next lesson until the current one is completed.

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-20-21-16-29-192-168-1-46-raspberrypi-realvnc-viewer.png)

The lessons teach the instructions step by step, with videos, so that you can build the programs, and the Scratch editor sits alongside for carrying them out:

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-20-21-22-21-192-168-1-46-raspberrypi-realvnc-viewer.png)

### **Crowpi Learning Python educational program**

It shows 32 lessons: which ones we have completed (1), which one we are on (2) and which ones remain. You cannot move on to the next lesson until the current one is completed.

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-20-21-24-16-192-168-1-46-raspberrypi-realvnc-viewer.png)

In the lessons (1) the code to write is explained step by step (2), together with explanations of the sensors (3), and alongside is the Thonny editor (4) for writing the program and running it (5).

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-20-21-26-50-192-168-1-46-raspberrypi-realvnc-viewer.png)

Driving the 8x8 RGB LED matrix requires the following library, and importing it gives an error: `from rpi_ws281x import PixelStrip, Color`. If you know how to solve this problem, get in touch with Catedu [www.catedu.es](https://www.catedu.es) (Información section).
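The text above does not say what the import error is, so the cause is unknown. One possibility, which is only an assumption, is that `rpi_ws281x` is not installed for the Python interpreter that runs the lesson. The sketch below is a minimal way to check that from the same interpreter. The helper name `module_available` is made up for this example.

```python
import importlib.util


def module_available(name: str) -> bool:
    """Return True if the top-level module `name` can be found by this interpreter."""
    return importlib.util.find_spec(name) is not None


if __name__ == "__main__":
    if module_available("rpi_ws281x"):
        print("rpi_ws281x is installed for this interpreter")
    else:
        # Assumption: a missing package is the cause; other causes are possible.
        print("rpi_ws281x was not found for this interpreter")
```

If the module is reported as missing, installing it with `pip3` for that interpreter may help. If it is present, the problem lies elsewhere; the library often has to be run with root privileges, for example.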

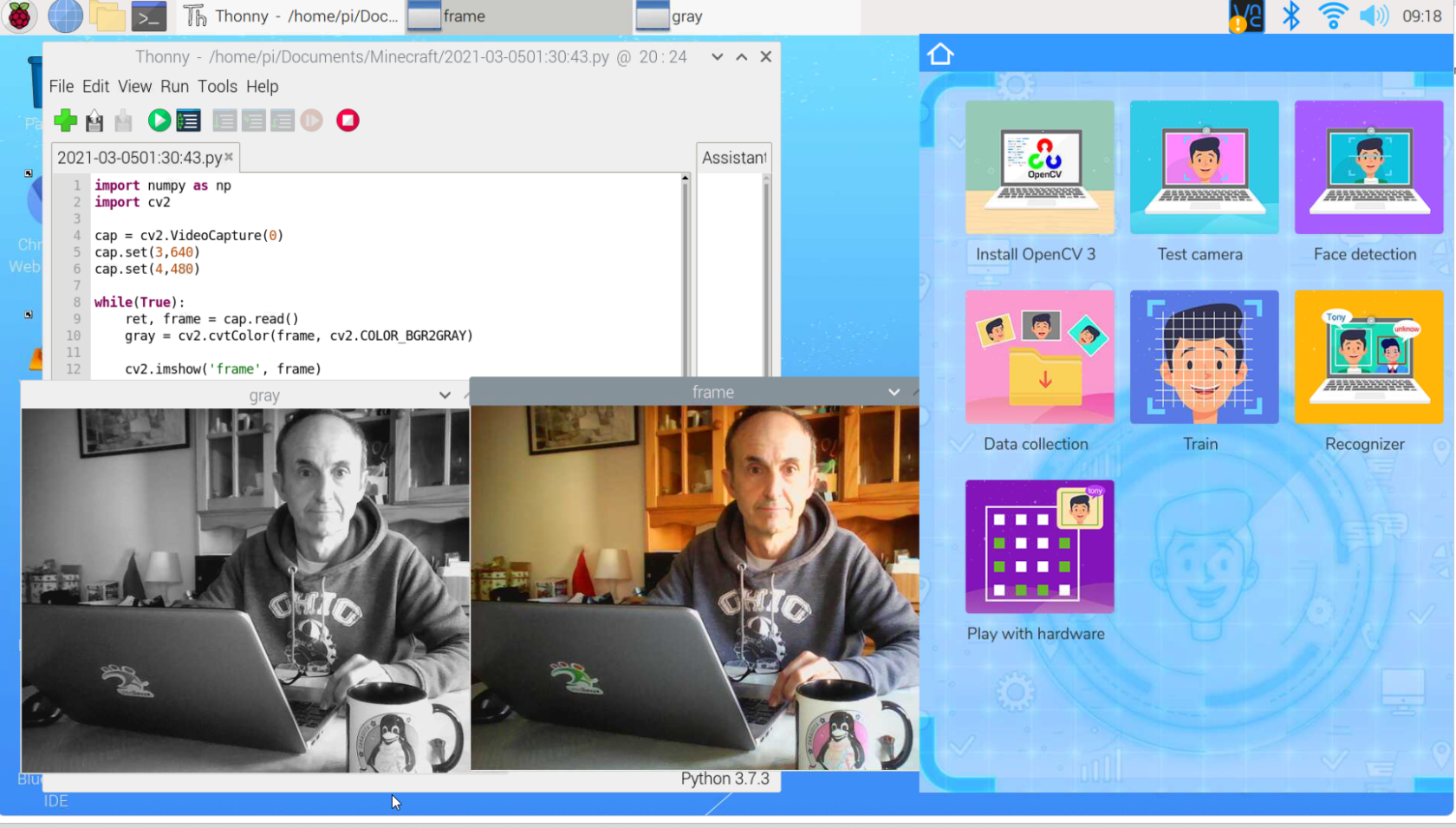

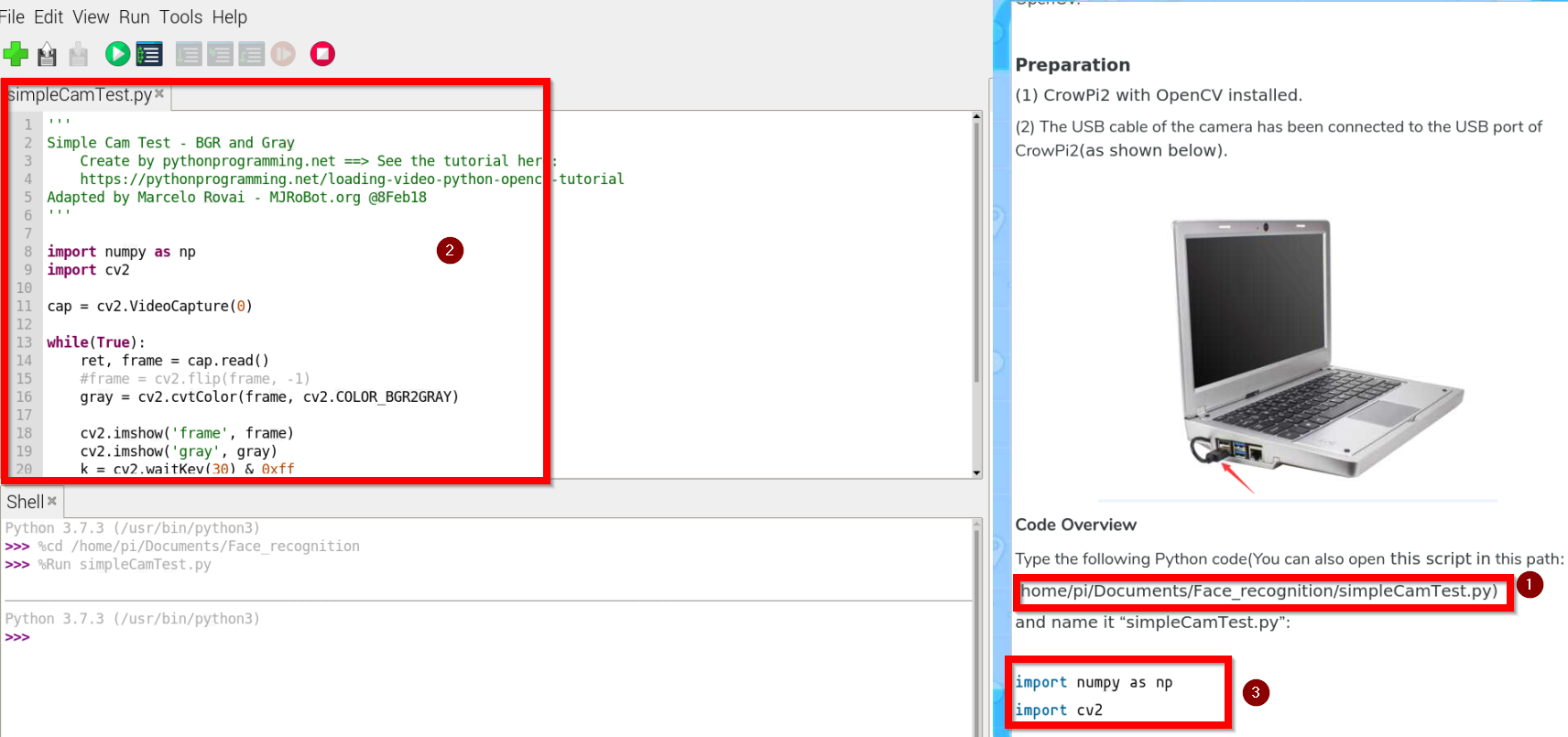

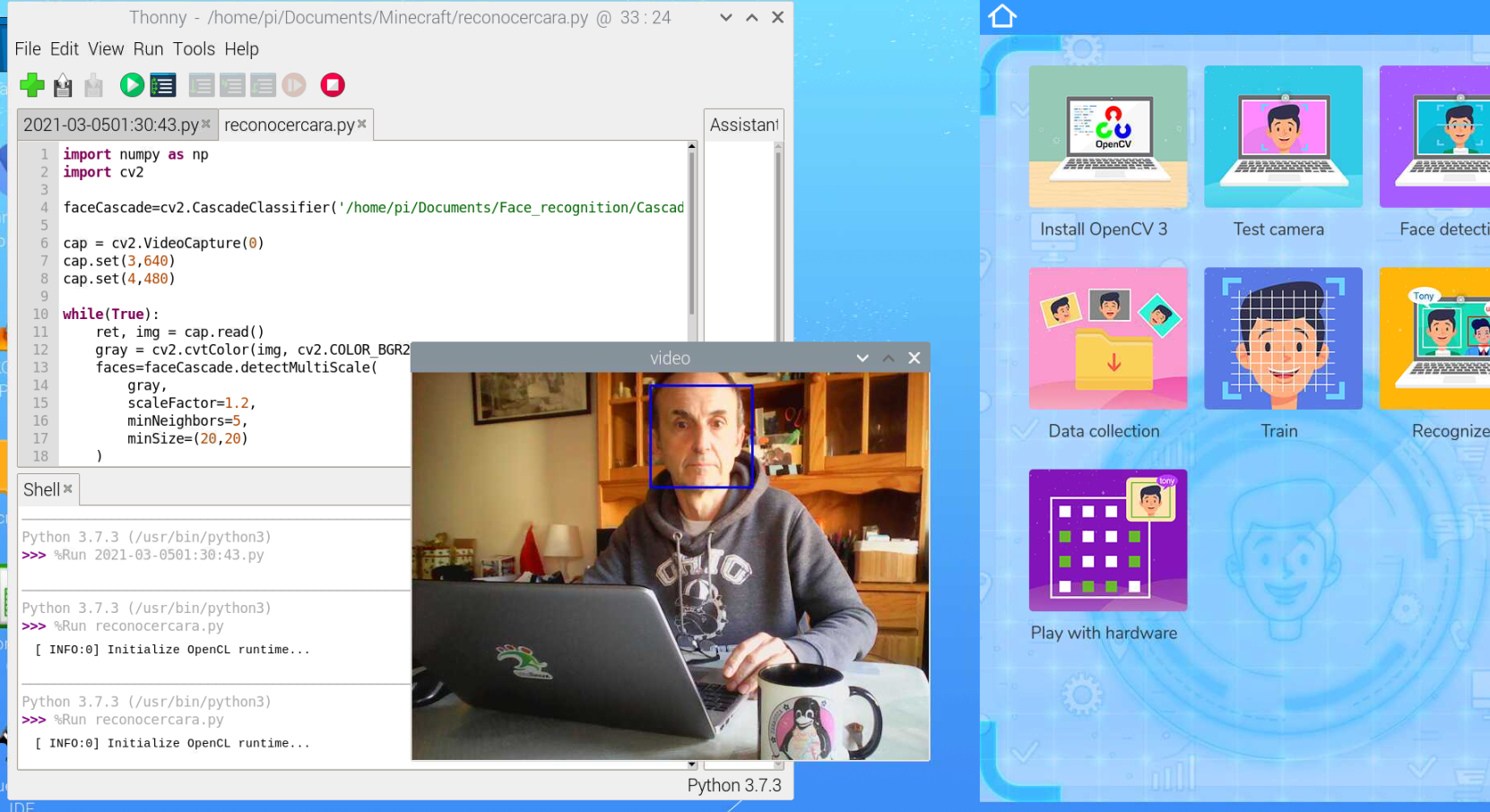

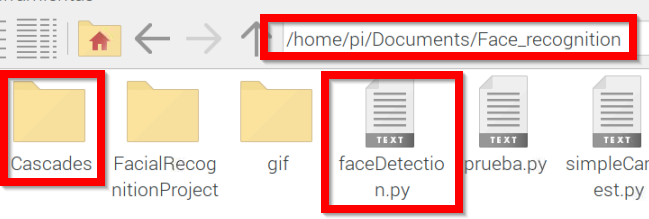

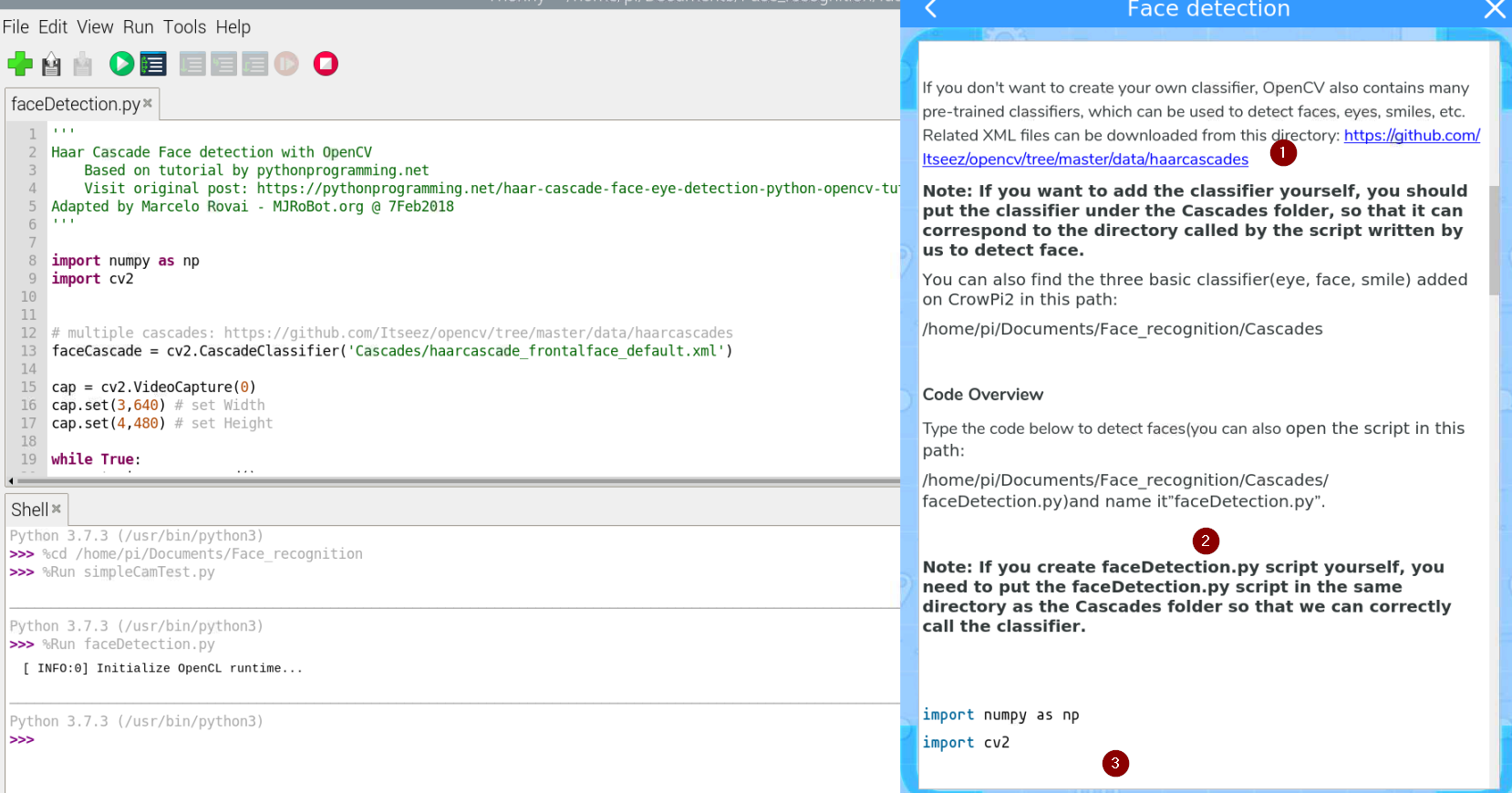

### **Crowpi AI educational program**

**[ ](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-21-09-10-47-192-168-1-46-raspberrypi-realvnc-viewer.png)[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-21-09-11-35-192-168-1-46-raspberrypi-realvnc-viewer.png)**

##### **Crowpi AI educational program - Speech Recognition**

It is based on the software and training tool [https://snowboy.kitt.ai/](https://snowboy.kitt.ai/) but, as you can see, that project is no longer maintained, so the lessons shown in Crowpi Learning no longer work.

##### **Crowpi AI educational program - Face Recognition - installing OpenCV 3**

To use image recognition we need the OpenCV 3 software. **Do not follow the guide shown under Install Open CV3; it is obsolete.** Simply run this command in a terminal:

```
sudo apt install python3-opencv
```

##### **Crowpi AI educational program - Face Recognition - camera test**

Let's try it with this program, which displays the captured frames in grey and in colour:

```
import numpy as np
import cv2

cap = cv2.VideoCapture(0)   # open the first camera
cap.set(3,640)              # property 3: frame width
cap.set(4,480)              # property 4: frame height
while(True):
    ret, frame = cap.read()
    if not ret:             # stop if no frame could be read
        break
    gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
    cv2.imshow('frame', frame)
    cv2.imshow('gray', gray)
    k=cv2.waitKey(30) & 0xff
    if k == 27:             # ESC key exits
        break
cap.release()
cv2.destroyAllWindows()
```

Taken from [https://peppe8o.com/crowpi2-reviewing-the-famous-all-in-one-stem-solution/](https://peppe8o.com/crowpi2-reviewing-the-famous-all-in-one-stem-solution/)

The result:

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-21-09-18-26.png)

A more elaborate program is available by clicking on the first tutorial:

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-22-08-57-57-192-168-1-46-raspberrypi-realvnc-viewer.png)

Source: Tutorial Learning Crowpi2

When it opens, we find the path of the program **SimpleCamTest.py** (1); we open it (2) and follow the explanations of the code in the tutorial (3).
[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-22-08-58-58-192-168-1-46-raspberrypi-realvnc-viewer.png)

The result is the same, but the program is more elaborate, with the advantage that it is explained step by step in the tutorial.

##### **Crowpi AI educational program - Face Recognition - face detection**

A simple program would be:

```
import numpy as np
import cv2

# Haar cascade with the frontal-face patterns
faceCascade=cv2.CascadeClassifier('/home/pi/Documents/Face_recognition/Cascades/haarcascade_frontalface_default.xml')
cap = cv2.VideoCapture(0)
cap.set(3,640)              # property 3: frame width
cap.set(4,480)              # property 4: frame height
while(True):
    ret, img = cap.read()
    if not ret:             # stop if no frame could be read
        break
    gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
    faces=faceCascade.detectMultiScale(
        gray,
        scaleFactor=1.2,
        minNeighbors=5,
        minSize=(20,20)
    )
    for (x,y,w,h) in faces:
        # draw a rectangle around each detected face
        cv2.rectangle(img,(x,y),(x+w,y+h),(255,0,0),2)
        roi_gray=gray[y:y+h, x:x+w]
        roi_color=img[y:y+h, x:x+w]
    cv2.imshow('video', img)
    k=cv2.waitKey(30) & 0xff
    if k == 27:             # ESC key exits
        break
cap.release()
cv2.destroyAllWindows()
```

Taken from [https://peppe8o.com/crowpi2-reviewing-the-famous-all-in-one-stem-solution/](https://peppe8o.com/crowpi2-reviewing-the-famous-all-in-one-stem-solution/)

The result:

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-21-09-25-31-192-168-1-46-raspberrypi-realvnc-viewer.png)

A more elaborate program is the one in the tutorial, under this button:

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-22-10-04-32-192-168-1-46-raspberrypi-realvnc-viewer.png)

Source: Tutorial Learning Crowpi2

The program **faceDection.py** is in this directory:

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-22-10-05-38-192-168-1-46-raspberrypi-realvnc-viewer.png)

It is important that it sits in the same location as the **Cascades** folder, as explained in (1). **Cascades** is a folder that will contain the face patterns.
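Because the script depends on the **Cascades** folder sitting next to it, checking for the pattern file before starting the camera can save time. The following is a minimal sketch and not part of the tutorial; the helper name `find_cascade` is hypothetical. As far as we know, `cv2.CascadeClassifier` does not raise an error when the file is missing: it returns an empty classifier (its `empty()` method returns `True`) and the failure only shows up later in `detectMultiScale`.

```python
from pathlib import Path

CASCADE_NAME = "haarcascade_frontalface_default.xml"


def find_cascade(script_dir, name=CASCADE_NAME):
    """Return the path of a cascade file inside <script_dir>/Cascades.

    Raises FileNotFoundError with a readable message if the file is missing.
    """
    path = Path(script_dir) / "Cascades" / name
    if not path.is_file():
        raise FileNotFoundError(f"Cascade file not found: {path}")
    return path


if __name__ == "__main__":
    # Hypothetical usage: look for the Cascades folder in the current directory.
    try:
        print(find_cascade(Path.cwd()))
    except FileNotFoundError as err:
        print(err)
```

The returned path can be passed as `str(path)` to `cv2.CascadeClassifier`.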
As the tutorial says (2), you can download the pattern files from [https://github.com/opencv/opencv/tree/master/data/haarcascades](https://github.com/opencv/opencv/tree/master/data/haarcascades) and put them in the Cascades folder. The program is explained step by step in the tutorial (3).

[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-22-10-09-00-192-168-1-46-raspberrypi-realvnc-viewer.png)

##### **Crowpi AI educational program - Face Recognition - 01-Data collection**

We open the next tutorial:

**[](https://libros.catedu.es/uploads/images/gallery/2024-12/2024-12-22-10-24-49-192-168-1-46-raspberrypi-realvnc-viewer.png)**

Source: Tutorial Learning Crowpi2

In the same directory as before we find **FacialRecognitionProyect**, and inside it the following program, **01\_face\_dataset.py**:

```
''''
Capture multiple Faces from multiple users to be stored on a DataBase (dataset directory)
    ==> Faces will be stored on a directory: dataset/ (if does not exist, pls create one)
    ==> Each face will have a unique numeric integer ID as 1, 2, 3, etc

Based on original code by Anirban Kar: https://github.com/thecodacus/Face-Recognition

Developed by Marcelo Rovai - MJRoBot.org @ 21Feb18
'''

import cv2
import os

cam = cv2.VideoCapture(0)
cam.set(3, 640) # set video width
cam.set(4, 480) # set video height

face_detector = cv2.CascadeClassifier('haarcascade_frontalface_default.xml')

# For each person, enter one numeric face id
face_id = input('\n enter user id end press
```

The next program is 03\_face\_recognition\_RGB.py, which **DOES NOT WORK**.

The program shows a happy or sad face on the 8x8 RGB LED matrix, but it requires the following library, and importing it gives an error: `from rpi_ws281x import PixelStrip, Color`. If you know how to solve this problem, get in touch with Catedu www.catedu.es (Información section).

**The program above, 03\_face\_recognition\_RGB.py, DOES NOT WORK**: when it detects a face it gives the error shown below. If you know how to solve this problem, get in touch with Catedu www.catedu.es (Información section).

```
Exception in thread Thread-431:
Traceback (most recent call last):
  File "/usr/lib/python3.7/threading.py", line 917, in _bootstrap_inner
    self.run()
etc..
```

**The solution is not to use it and to use the file 03\_face\_recognition.py instead**, which does work.

**How?** At lines 58-59-60 we add whatever we want the CrowPi2 hardware to do when a face is detected:

```
if (confidence < 70):
    id = names[id]
    confidence = " {0}%".format(round(100 - confidence))
```

To do this we create a function, which I have called **Hardware(id)**, that does what we want when face id is detected. For example, if it detects the face with id=3, it switches on the vibration motor:

```
import RPi.GPIO as GPIO
import time

# define vibration pin
vibration_pin = 27
# Set board mode to GPIO.BCM
GPIO.setmode(GPIO.BCM)
# Setup vibration pin to OUTPUT
GPIO.setup(vibration_pin, GPIO.OUT)

def Hardware(id):
    nombre=names[id]
    if (id==3):
        # turn on vibration
        GPIO.output(vibration_pin, GPIO.HIGH)
        # wait half a second
        time.sleep(0.5)
        # turn off vibration
        GPIO.output(vibration_pin, GPIO.LOW)
```

So, in the file 03\_face\_recognition.py, at lines 58-59-60, we insert the call to Hardware(id):

```
if (confidence < 70):
    # here I put what I want it to do when it detects a known face
    Hardware(id)
    # end
    id = names[id]
    confidence = " {0}%".format(round(100 - confidence))
```

The modified file is:

```
''''
Real Time Face Recognition
    ==> Each face stored on dataset/ dir, should have a unique numeric integer ID as 1, 2, 3, etc
    ==> LBPH computed model (trained faces) should be on trainer/ dir

Based on original code by Anirban Kar: https://github.com/thecodacus/Face-Recognition

Developed by Marcelo Rovai - MJRoBot.org @ 21Feb18
'''

import cv2
import numpy as np
import os

# MINE: libraries and vibration motor setup
import RPi.GPIO as GPIO
import time
# define vibration pin
vibration_pin = 27
# Set board mode to GPIO.BCM
GPIO.setmode(GPIO.BCM)
# Setup vibration pin to OUTPUT
GPIO.setup(vibration_pin, GPIO.OUT)
################################################

recognizer = cv2.face.LBPHFaceRecognizer_create()
recognizer.read('trainer/trainer.yml')
cascadePath = "haarcascade_frontalface_default.xml"
faceCascade = cv2.CascadeClassifier(cascadePath);

font = cv2.FONT_HERSHEY_SIMPLEX

# initiate id counter
id = 1

# names related to ids: example ==> Marcelo: id=1, etc
names = ['None', 'George Clooney', 'Javier Quintana', 'Javier', 'Z', 'W']

# Initialize and start realtime video capture
cam = cv2.VideoCapture(0)
cam.set(3, 640) # set video width
cam.set(4, 480) # set video height

# Define min window size to be recognized as a face
minW = 0.1*cam.get(3)
minH = 0.1*cam.get(4)

# MINE: my function for when a face is detected
def Hardware(id):
    nombre=names[id]
    if (id==3):
        # turn on vibration
        GPIO.output(vibration_pin, GPIO.HIGH)
        # wait half a second
        time.sleep(0.5)
        # turn off vibration
        GPIO.output(vibration_pin, GPIO.LOW)

while True:
    ret, img =cam.read()
    #img = cv2.flip(img, -1) # Flip vertically
    gray = cv2.cvtColor(img,cv2.COLOR_BGR2GRAY)

    faces = faceCascade.detectMultiScale(
        gray,
        scaleFactor = 1.2,
        minNeighbors = 5,
        minSize = (int(minW), int(minH)),
    )

    for(x,y,w,h) in faces:
        cv2.rectangle(img, (x,y), (x+w,y+h), (0,255,0), 2)
        id, confidence = recognizer.predict(gray[y:y+h,x:x+w])

        # Check if confidence is less than 70 ==> "0" is perfect match
        if (confidence < 70):
            # MINE: here I put what I want it to do when it detects a known face
            Hardware(id)
            # end
            id = names[id]
            confidence = " {0}%".format(round(100 - confidence))
        else:
            id = "unknown"
            confidence = " {0}%".format(round(100 - confidence))

        cv2.putText(img, str(id), (x+5,y-5), font, 1, (255,255,255), 2)
        # cv2.putText(img, str(confidence), (x+5,y+h-5), font, 1, (255,255,0), 1)

    cv2.imshow('camera',img)

    k = cv2.waitKey(10) & 0xff # Press 'ESC' for exiting video
    if k == 27:
        break

# Do a bit of cleanup
print("\n [INFO] Exiting Program and cleanup stuff")
cam.release()
cv2.destroyAllWindows()
# MINE: clean up GPIO
GPIO.cleanup()
```
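One side effect of this approach is that `Hardware(id)` runs for every frame in which the face is recognised, and each call sleeps for half a second, so the video can become choppy while face 3 is in view. A possible refinement, sketched here under our own assumptions and not part of the original file, is to limit how often the action fires. The `Cooldown` class, the `hardware` function and the 2-second interval are hypothetical; the `vibrate` argument stands for the GPIO on/sleep/off sequence shown above.

```python
import time


class Cooldown:
    """Allow an action at most once every `seconds` seconds."""

    def __init__(self, seconds, clock=time.monotonic):
        self.seconds = seconds
        self.clock = clock      # injectable so it can be tested without waiting
        self._last = None       # time of the last allowed action

    def ready(self):
        """Return True (and restart the timer) if enough time has passed."""
        now = self.clock()
        if self._last is None or now - self._last >= self.seconds:
            self._last = now
            return True
        return False


# Assumption: one vibration every 2 seconds is enough feedback.
vibration_cooldown = Cooldown(2.0)


def hardware(face_id, vibrate):
    """Call vibrate() for face id 3, but only when the cooldown allows it."""
    if face_id == 3 and vibration_cooldown.ready():
        vibrate()
        return True
    return False
```

In the recognition loop, `Hardware(id)` would be replaced by `hardware(id, vibrate)`. The half-second pause still happens, but only once per cooldown period instead of on every frame.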